Standards Make the World

David Lang

. . . there is a popular fallacy about this business of setting standards. It is the belief that it is inherently a dull business. One of the reasons that I am glad to see the present history appear is that I believe it will help to dissipate this misunderstanding. Properly conceived the setting of standards can be, not only a challenging task, but an exciting one.

—Vannevar Bush, 1966

from the prologue to Measures for Progress:

A History of the National Bureau of Standards

As far as Eric Stackpole is concerned, marine robotics has stalled out amidst an unfinished revolution. He should know—he started it.

A decade ago, Stackpole created a prototype of a small remotely operated vehicle (ROV), built using off-the-shelf components and an acrylic housing that could be laser-cut from a simple design file. I was with him in the garage. With little money and big dreams of a low-cost device, we shared the project online in the hopes we’d get help from others. We called it OpenROV, an open source underwater robot. Our goal was a robot that could dive to depths of 100 m while streaming back real-time video, all for less than $1,000. If we had tried to buy a commercially available equivalent at the time, the price would have been closer to $30,000.

OpenROV launched on Kickstarter and kicked off a wave of innovation in the close-knit world of marine robotics.1 Predictably, many of the pros wrote it off as a toy or a hobby project, which was true when it started. But we steadily improved the original design, and it matured quickly.

The project struggled and succeeded in ways we didn’t intend. Being open source helped us meet people and gather a few contributions, but not nearly as many as we’d hoped. And compared to the speed of open source software development, the iteration cycles were painfully slow. Moving physical atoms proved logistically more complex than sending digital bits.

Despite challenges, OpenROV was effective in changing the economics of small ROVs. Thousands of people bought and built our initial kits. Dozens of projects and companies started from those ideas, and you can now find several capable ROVs that meet our initial specs. Small ROVs got good and cheap, just as we’d hoped and not at all how we’d planned.

Now Eric Stackpole wants to try again. In June, without fanfare or warning, he posted three photos of an autonomous underwater vehicle (AUV) project in a newly created forum with the hope of, again, setting off a chain reaction.2 To the uninitiated, the design might look like any other prototype of an underwater robot, but it’s a major departure from industry norms.

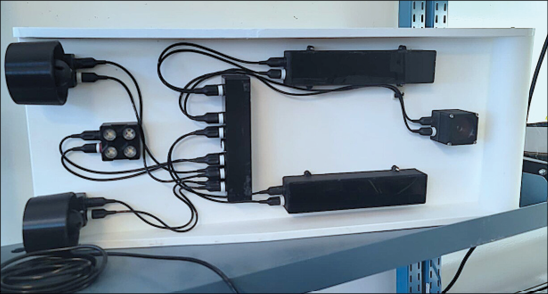

The typical AUV is shaped like a torpedo, a sleek hydrodynamic shape with the electronics and sensors all packed tightly inside and sealed off from the unforgiving ocean environment. Stackpole’s design, on the other hand, is exposed. Every component is its own modular part, and they’re all connected through a standardized interface which we’re calling Bristlemouth.3 The design is a glimpse of what’s possible—a point of departure for the next wave of invention.

Our journey from prototype builders to product manufacturers and eventually to protocol designers was revelatory. Once we turned serious attention to developing a technical standard, a new creative canvas opened. Standards-making, it turns out, is high-leverage design, ripe with the ability to change the technological playing field in ways that no individual firm can on its own. It’s like finding the control room of our modern world.

Prototype AUV

Underbelly showing the modular components in place using Bristlemouth connectors

Components with Bristlemouth connectors

We ditched idealism for pragmatism. Our learning curve—from fumbling through an open source hardware project to participating in the creation of an interoperability standard—is instructive.

Others have come to the same epiphany from different directions. Protocol-first thinking is having a moment. More engineers are turning to standards-making as a way to shape (and reshape) the technological infrastructure we all rely on. The momentum is focused in the digital realm, but there’s every reason to think bigger: hardware, biotechnology, infrastructure.

The playbook is stylistically different from standards-making of the past century. The pendulum is swinging back from an era of Big Standard (international organizations, multinational corporations, and entrenched players) to a more entrepreneurial game played by startups, decentralized organizations, and even individuals. The next generation of standardizers is coming into its own, driven by the example set by open source software and a growing sense of agency.

This essay charts the path I wish we had taken. It starts with an overview and history of standards: the fundamentals. Next is a deep dive on the Internet Protocol, paying special attention to how the ARPANET team changed standards-making and influenced open source software. Then it compares the challenges faced by open source hardware to the success of another “disruptive” model of standards-making, a lesson on how ignoring the fundamentals can limit effectiveness. Lastly, it touches on what happens when things go right: the joy of standardizing.

And it’s worth getting right. When the stars align—when the cultural cachet of standards-making rises into a definitive social movement—technological development can be supercharged. And if done well, the changes enable everyone to build atop a new, higher ground.

Scope and Scale

Standards are everywhere. These nearly invisible rules establish trust between engineers and give rise to commerce, industry, and possibilities. Even now, just by reading these words, you are relying on dozens, if not hundreds, of guiding technical standards. Some of them might be familiar, like the World Wide Web (WWW) or the Internet Protocol (IP) that delivers packets of information to your device. What about the standards that went into manufacturing that device, like the allowable limits for Radio Frequency Interference (RFI) and Electromagnetic Interference (EMI)? What about the shipping and transportation standards that brought it across oceans? Would you know where to find the spec? What about the group that created them? Or who maintains them?4

The rabbit hole of questioning extends to almost every object in our lives. Technical standards form the foundation of our built environment. They’re often mistaken as limits or boundaries to creativity, which can happen when they’re poorly constructed. But if they’re well designed and effectively implemented by engineers on the front lines, standards can become enabling technologies: the Internet, shipping containers, time.

Startups and companies get all the headlines, but the tools we use to cooperate—standards and protocols—drive an equal measure of civilizational progress.

Despite their importance, standards often go unnoticed. Most people, if they’re aware of them at all, think they’re boring and overly bureaucratic. This is partially due to the word itself—standard. It sounds basic and it’s broad enough to cause constant confusion. A standard could refer to anything from the Unicode system that approves new emojis to the bacteria levels allowed in pasteurized milk. Standards end up as outcomes—agreed-upon measurements, terms, and rules—but they always involve a process, too. For the purposes of this essay, standards refer to the spec and standards-making to the process.

The term protocol is also diluted from overuse. The word can be used to describe everything from the steps of scientific experimentation to royal etiquette. In this essay, I’m mostly referring to protocols in the way that computer scientists use the term: a specific set of rules and instructions for handling and exchanging information on digital networks, that is, standardized protocols. In that sense, protocols are a specific subgenre of standards.

The names are just a small reason that standards get overlooked. A bigger issue is first impressions.

The popular portrayal of standards is through coverage of “standards wars” where similar implementations compete for supremacy in the marketplace: VHS vs. Betamax, Blu-ray vs. HD DVD, AC vs. DC. These famous examples get attention because of the public nature of the competition and the investment on either side, but standards wars are relatively uncommon.5 Standards-making is always a negotiation, with competing ideas and trade-offs on multiple sides, but the majority of those disagreements and differences are settled in committees and small groups through defined processes well before they ever become an open conflict in the market. Still, the visibility of standards wars remains, and it makes the whole endeavor seem corporate and dangerous.

The other common interaction with standards is as a boundary or constraint. People encounter them on the way to some other goal. For example, a product designer runs into several safety and interoperability standards through the course of making a new product. An architect is bound by building codes in designing a new home. Even managers are guided by standards—ISO 9000—when they try to add quality assurance measures to company processes. Then, when anyone starts asking why the standard is the way it is, they find a committee or a consortium or some other process that seems impenetrable.

Understandably, this is where most people stop thinking about standards. They adhere to their basic legal and technical obligations and they move on.

This is unfortunate. A deeper understanding of standards-making—and how that process has evolved over time—creates a healthy respect for the scale of influence. Standards are some of the most powerful tools we have to affect our world. And here’s the kicker: you can make them.

Standards are not divine rights. They are made and remade by (usually small) groups of people and projected into the world through various means and with varying effectiveness. And that process is dynamic. Standards-making is something that anyone can engage in, even though almost no one thinks to do it. But they should. You should. Too often, better societal outcomes (overcoming technological bottlenecks or ensuring tools are safely deployed) are held back by poorly designed or missing standards.

Like any other language, developing this type of standards fluency starts with a new vocabulary.

A Standard Taxonomy

In Engineering Rules: Global Standard Setting since 1880, JoAnne Yates and Craig Murphy tell the long story of standards-making. They write almost spiritually about the role and mission of standardization entrepreneurs, the unsung heroes who convene and build consensus amongst engineers and organizations.6 They suggest viewing the process as “an entirely different realm with a very different logic from either commerce or politics, something that developed in response to the greater social complexity that accompanied the pressure toward the greater economic integration of industrial capitalism.”7

Neither state nor market, but essential to both. According to Yates and Murphy, the early standards worked for three main purposes: safety, interoperability, and performance. They use the history of the early standardization efforts of steam boilers (safety), screw threads (interoperability), and steel rails (performance) as examples of each. Exploding steam boilers on riverboats caused engineers and policymakers to rally around standards for design, construction, and maintenance. Screw threads were a matter of obvious convenience; standard designs would make lost screws easily replaceable by local shops. Steel manufacturers needed a way to quantify their durability and justify higher prices over iron rails, but they didn’t have an easy way to convince buyers. Performance standards—industry-wide assurances of quality—helped facilitate commerce.8 While the purposes are different enough to justify different names, they’re still all lumped into “standards.” The commingling of terms hides their utility.

The categories aren’t perfect. Some standards don’t fit squarely into these labeled boxes. There’s a genre of contractual standards that are almost-but-not-exactly interoperability or performance standards. The Simple Agreement for Future Equity (SAFE) note created by Y Combinator is an example.9 The partners at Y Combinator were frustrated by the unnecessary complexity and (sometimes) predatory nature of investment deal structures for early-stage startups, so they created a standardized document to simplify terms. They released it for free and open use by Y Combinator companies and beyond, and quickly reshaped the norms of startup investing.

Nevertheless, the idea of a taxonomy is a good start—a useful start. It sets up the first important question in standards-making: What’s the goal? Safety, interoperability, or performance?

The categorization of standards is relevant for many major challenges facing society. In order to make progress, you need to know which flavor of standard you’re dealing with. Take the debate and discussion around AI regulation and alignment, which is fundamentally a question about missing safety standards. Holden Karnofsky and Open Philanthropy recently put up a bounty to source more case studies of effective safety standards in hopes of gathering insight.10

Progress on climate change, too, is hampered by standards issues. Take the growing demand for carbon offsets. The carbon market cannot operate without a trusted mechanism for verification. The Guardian’s reporting on the recent analysis of the unreliable metrics of Verra, the world’s leading carbon standard, has rattled confidence and left the entire industry grasping for how to move forward.11 Verra is missing effective performance standards. And it’s not just the obvious cases of fraud where the problems arise. New industries like carbon dioxide removal, with hundreds of novel approaches and market entrants, are missing a set of performance standards to benchmark the technologies. Recognizing this shared challenge, a handful of industry leaders recently convened to outline the “Reykjavik Protocol” for measuring and verifying their impact.12 These entrepreneurs recognize the importance of strong, rigorous standards to underpin the work. Their collective success hinges on adoption.

Standards problems often manifest in this form, not as a tragedy of the commons, but as a failure of the commons to materialize in the first place. The economic forces placed on individual actors create just enough friction to leave the problem unsolved, even though addressing the issue would benefit everyone.

But how? The best way to learn how to work with standards is by studying how they’ve evolved over time.

How Standards are Made

Standards-making is not a recent phenomenon. Soon after science and engineering professionalized in the nineteenth century, the benefits of cooperation became evident.

Standards started as humble proposals within the emerging professional organizations of engineers and scientists. A famous example was a presentation by Joseph Whitworth to the Institution of Civil Engineers in 1841: A Paper on an Uniform System of Screw Threads.13 Whitworth was a respected engineer in England, known for his commitment to precision in planed surfaces and accurate measurement.14 He had grown concerned by the “great inconvenience” caused by the lack of consistency amongst the screws from different machine shops.15 To settle the matter, he collected all the available screws from around the country and compared them. His synthesis culminated in the paper and argument for uniformity.

Seeing the benefits across the Atlantic, William Sellers proposed an updated design to the Franklin Institute in Philadelphia in 1864.16 Again, the occasion was a paper and proposal to a group of engineering colleagues. Sellers’ main gripe was that Whitworth’s design was too difficult to make cheaply. Sellers’ design could be easily manufactured (and already was, conveniently, at his machine shop in Philadelphia) using a “relatively simple formula to calculate pitch for any diameter.”17

The effectiveness of these early standardization efforts set the stage for formal standards-making processes to emerge. And that formula has changed considerably over the years. Yates and Murphy separate the major standards movements into three distinct waves, each with unique operating modes.

The first wave occurred between the 1880s and 1920s.18 The standards-making process was mostly straightforward, mimicking what worked for Sellers and Whitworth: groups of interested parties made the case for a shared design and convinced others to join. Working groups of engineers, interested business leaders, and relevant government officials were formed to oversee their adoption. Organization followed function.

The gradual adoption of Greenwich Mean Time (GMT) is a good example, one that impacted the railroads and beyond. It started as a maritime tool as British mariners kept one chronometer at Greenwich time in order to keep track of their longitudinal position. The British railways adopted GMT in the 1840s to keep the trains running on time, and other uses in Britain quickly followed. Following the British lead, engineers and scientists representing American and Canadian railways met in 1883 to create a standard railway time across the continent along with established time zones based on GMT. The adoption and implementation by the railways set the stage for the International Meridian Conference in 1884, which formally established Greenwich as the prime meridian and set the standard for global, universal time.19 Pragmatism ruled the day.
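The chronometer trick rests on simple arithmetic: the Earth turns 360 degrees in 24 hours, so each hour of difference between Greenwich time and local solar time corresponds to 15 degrees of longitude. A minimal sketch in Python follows; the function name and the sign convention (east positive, west negative) are assumptions made for illustration, not part of any historical procedure.

```python
def longitude_from_times(greenwich_hours: float, local_solar_hours: float) -> float:
    """Estimate longitude in degrees from two clock readings.

    Both arguments are times of day in hours (0 to 24). The result is
    positive east of Greenwich and negative west of it (an assumed convention).
    """
    # The Earth rotates 360 degrees in 24 hours: 15 degrees per hour.
    degrees_per_hour = 360.0 / 24.0
    diff = local_solar_hours - greenwich_hours
    # Wrap the difference into [-12, 12) so readings that straddle midnight still work.
    diff = (diff + 12.0) % 24.0 - 12.0
    return diff * degrees_per_hour


# The sun is at its highest (local noon) while the chronometer reads 15:00 Greenwich time.
print(longitude_from_times(15.0, 12.0))  # -45.0, i.e. 45 degrees west
```

The same arithmetic explains why a shared reference time mattered so much: the calculation is only as good as the agreement on what the reference clock means.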

Support of technical standardization coalesced into a recognizable social movement by the 1920s. Standards entrepreneurs like Charles le Maistre, head of the British Engineering Standards Association, gained status and influence through their commitment to the common project. However, this was not usually a profession or job in itself. Rather, it was, according to Yates and Murphy:

largely made up of men who volunteered to work on technical committees because that was something they believed professional engineers should do to serve the public good.20

They had adopted an ethic and a commitment to cooperative design, and they were busy proselytizing the benefits to the engineering masses.

The world wars slowed their momentum. As countries focused inward they became wary of cooperation, but the standards pioneers had left a blueprint: network effects ruled. Le Maistre and others had shown the value of strong national standards bodies and provided a glimpse of how international standards could work. After WWII, the next generation of standards entrepreneurs picked up the baton and took the ideas much further. The second wave had started by the 1950s, and it thrived between the 1960s and 1980s. Globalization was the story, and associations like the International Organization for Standardization (ISO) were formed to facilitate transnational adoption.

The momentum of ISO was buoyed by the emergence of global industries like air travel and freight shipping, as well as a convergence of political interests. The defining standard of the era, the intermodal container, literally connected the world.21 Developing countries saw standards adoption as the easiest path to international markets, whereas more powerful developed countries, like the U.S., recognized the importance of standards-setting as a way to sustain trade advantages. Most countries, from Japan to Sweden, fell in between, adopting the standards that made sense from a cost-saving or political perspective.22 Standards had gone global, with all the associated network effects and layers of bureaucracy.

The third wave of standards entrepreneurs grew alongside the development of computers and computer networking. As engineers and companies quickly innovated, the need for standards outpaced the capacity of the international, multi-stakeholder consensus process, and another model emerged in response. Yates and Murphy called this a “consortium” model, where the parties most closely involved in development (companies, engineers, etc.) self-organized to create standards that worked for their purposes.23

I’d go further than Yates and Murphy on the delineation.24 The consortia-based model is really two separate stories: insiders and outsiders. On the one hand, the information age brought standardization into every major corporate playbook. Technology companies compete to control standards in order to induce network effects and win market share. Stephen Walli refers to this as the art of “commercial diplomacy.”25 This way of working is encapsulated in “The Art of Standards Wars,” written by the economists Carl Shapiro and Hal Varian in 1999, the white-hot center of the dot-com boom. The article is full of stories and tactics (preemption, migration paths, commoditized complements, etc.) for starting and waging a standards war.26 The ideas were later expanded into a book, Information Rules, and Shapiro and Varian have gone on to serve influential roles at the U.S. Department of Justice and Google, respectively, in addition to their academic appointments.27

This conception of standards has dominated the corporate and regulatory landscape of the past 30 years. The recent news that Ford and GM are adopting Tesla’s electric vehicle charging standard is a good example.28 Tesla raced ahead to fill the market need, earning the right to set the spec, and now even their most ardent competitors are falling in line. It’s classic de facto standards-making.

But big company maneuvering isn’t the only standards story of the digital era. On the other end of the spectrum are the scrappy upstarts. I call the process “disruptive standards-making” because of the close resemblance to Clayton Christensen’s model of disruptive innovation where unassuming outsiders break through with fast-improving technology.29

Disruptive Standards-Making

The Internet Protocol was the pioneering disruptive standard, even though DARPA didn’t set out to create a new type of standards-making process. The ARPANET program was meant to solve a practical problem in the cheapest way possible. Vint Cerf, a key member of the ARPANET program and Network Working Group team, understood the basic motivations of the agency, while also harboring an indifference to the formal networking protocol in development at ISO (which had formed the Open Systems Interconnection group, or OSI). From an interview with the Charles Babbage Institute in 1990:

The Internet, which was spawned out of this conglomeration of different packet technologies that DARPA initiated, has already had a pretty dramatic impact on both the military and the commercial world as far as I can tell. You can’t pick up a trade press article anymore without discovering that somebody is doing something with TCP/IP, almost in spite of the fact that there has been this major effort to develop international standards through the international standards organization, the OSI protocol, which eventually will get there. It’s just that they are taking a lot of time.30

Once again, like the original standards-makers, the organizational efforts follow an idea that is already working in practice. Disruptive standards-making falls somewhere on the spectrum between de facto and voluntary-consensus: elements of both strategies mixed with heavy doses of entrepreneurial hubris. It’s an attitude of “we’re doing this. Are you coming?”

To be clear, the model I’m describing goes against the established norms—but not the ethos—of most standards-making bodies. The IEEE, for example, sets out clearly defined steps for creating a standard:

- Initiating the project

- Mobilizing the working group

- Drafting the standard

- Balloting the standard

- Gaining final approval

- Maintaining the standard31

Disruptive standards work by a different logic. The process isn’t posted on any official channels, but it goes like this:

- Get something working—a prototype, an integration, a protocol.

- Gain traction and adoption within the market.

- Backfill any committee and administration work needed to make it official within a standards body like the IEEE or ASTM. Or, like the Internet and Internet Engineering Task Force (IETF), roll your own governance.

It’s not about skirting the rules. The guidelines and best practices of the standards bodies are in place for a good reason: they work. For decades, these organizations have honed best practices around how to manage the disparate motivations within a working group. And their system still accounts for the vast majority of industrial standards. The new model for disruptive standards makes that process better by filling in gaps or jumpstarting the action in stagnant areas.

This brings up the second important question in standards-making: Who’s it for? And what’s the minimum viable buy-in needed to get a network of users started?

The Internet kicked off the next great era of standards-making.32 Even the Internet Engineering Task Force (IETF), the group responsible for managing the nascent Internet protocol, recognized the moment. David Clark’s famous address to the IETF in 1992, “A Cloudy Crystal Ball,” is remembered for the defiant credo of “rough consensus and running code,” but the full text of the slide shows the broader historical awareness (see figure).33

The World Wide Web would follow, starting with a small team of outsiders at CERN who got the damn thing working.34 This method of disruptive standards-making has caught on quickly amongst software developers. In fact, it has evolved into its own idea and philosophy: open source software and hacker culture. The Internet wasn’t the beginning of the idea, but it had an important congealing effect. In The Cathedral & the Bazaar, Eric Raymond charts the path from early computing to the hacker culture that emerged around open source software:

The first intentional artifacts of the hacker culture—the first slang lists, the first satires, the first self-conscious discussions of the hacker ethic—all propagated on the ARPAnet in its early years.35

Again, as with the original standards-makers, an idealistic new ethic emerged to buoy the work. In many ways, they developed it from scratch based on what was simple and effective. For example, few open source developers seek approval before getting started. They see a need and proceed to fill it, whether by contributing to an existing codebase or starting anew, regardless of whether they are actively rebelling against legacy standards-making processes or blissfully unaware of them.

The history of RSS offers an anecdote of this permissionlessness. When the web was growing in popularity and the competition between Netscape and Microsoft was at a fever pitch, developers turned to content syndication as a way to attract publishers and attention. Small publishers and bloggers, like Dave Winer’s Scripting News, also recognized the value of stories being shared across the web and eagerly contributed to the development of the new standard. By the time the RSS-DEV Working Group released RSS 1.0 in 2000, a rift in the community had formed over how many features to include. Dave Winer released a competing specification, RSS 0.92, as a stripped-down alternative. The back and forth between the two camps went on until Winer and the UserLand team eventually released RSS 2.0 in 2002.36
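For readers who have never looked inside a feed, the format at the center of this dispute is small enough to sketch. The example below assumes the common RSS 2.0 layout (a `channel` holding `title`, `link`, and `description`, followed by `item` entries) and reads it with Python’s standard library. The sample feed, its URLs, and the `read_feed` helper are hypothetical and written purely for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample feed, written for illustration only.
SAMPLE_FEED = """<?xml version="1.0"?>
<rss version="2.0">
  <channel>
    <title>Example Weblog</title>
    <link>https://example.com/</link>
    <description>A hypothetical feed for illustration.</description>
    <item>
      <title>First post</title>
      <link>https://example.com/first</link>
    </item>
    <item>
      <title>Second post</title>
      <link>https://example.com/second</link>
    </item>
  </channel>
</rss>"""


def read_feed(xml_text):
    """Return the channel title and a list of (title, link) pairs for its items."""
    root = ET.fromstring(xml_text)
    channel = root.find("channel")
    items = [
        (item.findtext("title"), item.findtext("link"))
        for item in channel.findall("item")
    ]
    return {"title": channel.findtext("title"), "items": items}


feed = read_feed(SAMPLE_FEED)
print(feed["title"])
for title, link in feed["items"]:
    print(f"- {title}: {link}")
```

A format this compact is part of why a single motivated publisher could ship a competing version: there was very little to implement before a reader or a blog could adopt it.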

This Darwinian model of “if you don’t like it, fork it” has become commonplace in open source. Perhaps unsurprisingly, Winer would go on to write one of the most useful guides and condensed “rules for standards-makers” in the digital era.37

By almost every metric imaginable, open source software is thriving. In 2022, GitHub released a report documenting the staggering influence of open source.38 More than 90% of companies are relying on open source software in some way. More than 90 million developers are on the GitHub platform and they collectively made more than 400 million contributions to open source software projects in 2022 alone. And it’s big business, too. Roughly a third of Fortune 100 companies have dedicated open source program offices.

But more telling than any statistic is the response you get when you ask a software developer if and how they use open source software. You’ll likely get both an effusive endorsement of the philosophy and a list of favorite projects.

The rise of open source software represents a full-on standards movement. Engineers build reputations through their contributions to the commons in the same way that the early standards entrepreneurs would gain status for their efforts. It’s prestigious work that raises the tide for all boats.

How Standardization Efforts Fail

Carl F. Cargill made a career as a standards theorist and consultant. He has written multiple books and journal articles about the art and science of standards-making. Scholarly but not overly academic, Cargill’s perspective was born from direct experience, as he spent his career as a practicing standards strategist at a variety of Silicon Valley companies like Sun, Adobe, and Netscape.

One of his most important contributions to the field was a paper that inverted a basic premise. Instead of asking what factors go into success, he approached the question from the opposite direction: Why Standardization Efforts Fail.39

The paper articulated a harsh reality: the main ways that standards efforts fail are not in the official standards-making process itself. The conceptualizing, writing, and implementing (all the formal steps listed in the IEEE guide) are only half of the story, maybe less. There are two other failure modes that are more likely to contribute to missing standards. The first is on the front end: a failure to launch a potential standardizing effort. Any number of problems can stall a project at this pre-conceptualization stage: a lack of enthusiasm from potential sponsors, an originator who lacks charisma and vision, or even fear of antitrust or anti-competition accusations from market players. There’s also the possibility of flat-out opposition. In the early stages of the process, someone or some organization might feel that they have something to lose from the creation of a standard, and it becomes their mission to stall or derail the momentum, which is often not hard to do. Standards are fragile ideas in their infancy.

The second big failure mode is on the back end. Once the standard is complete, it must eventually find healthy adoption and implementation in the market in order to sustain and grow. Startups know they need product/market fit in order for their companies to be successful. Standardizers, too, must find market fit in order to survive. There are any number of ways to miss the market: being too late, incompatible implementations, or just plain ignorance. Cargill also highlights the perverse outcome of the standard being used to manipulate and manage the market. While broad adoption can make this outcome look like a success, the network effects can sometimes amount to a sort of rent-seeking by predatory organizations.

The danger zone for standards is sometimes in the starting but always in the sticking. So goes the third important question in standards-making: How will it meet the market and win adoption?

Viewed through this perspective, the booming open source software movement becomes explainable with basic economic logic. The cost of both starting a project and finding adopters has fallen dramatically. Thanks to enabling platforms like GitHub, there is little friction. Starting and sharing open source software projects has become so straightforward that the discussion has turned almost entirely to the challenge of how to sustain these projects and maintainers.40

Standards in Hardware

Unfortunately, and despite trying, the standards movement happening in software has hardly moved off the screen. There have been valiant attempts to create an open source hardware movement—and the Bristlemouth team were an active part of that in the early days—but it’s remained niche. The thinking goes: take what’s working about open source software, like version control and transparency, and do the same for electronics and mechanical designs.

The Open Source Hardware Association (OSHWA) created a twelve-point definition,41 based on a similar definition for open source software, which emphasized these tenets. There was an entire batch of open hardware companies that tried to build businesses through open sharing. Arduino led a revolution in microcontrollers by inviting amateur electrical engineers to prototype every conceivable idea. However, after recently raising a big funding round, they removed any mention of open hardware from their site.42 Who can blame them? Most open hardware companies didn’t succeed. Or when they did, the openness of their hardware played a minimal role. MakerBot, the 3D printing company which grew out of the RepRap open source project, was the most public example of having to hand back their open source bona fides.43 It turned out that supply chains and non-recurring engineering costs didn’t match the ideals or expectations of open source. At any reasonable scale, and with anything beyond hobbyist tools, the math and operational realities couldn’t square.

Like with open source software, the best measure of impact is a simple survey of an engineer. Ask the average mechanical or electrical engineer how open source hardware has impacted their work and you’re likely to get a confused look. Open source hardware, in the OSHWA-defined sense of the term, simply hasn’t caught on.

The open source hardware practitioners have kept the discussion alive for the past decade, through waves of attention and momentum. Progress has been made, especially in addressing the licensing challenges, but there’s still room for improvement. The opportunity to create a modern standards movement in the realm of atoms—as opposed to just bits—remains ripe for interpretation and invention. And there are good examples of enabling standards in the non-digital realm. However, the projects that succeeded didn’t adhere to any open source hardware dogma, but rather they stumbled onto the disruptive standards-making process.

The best example is the recent and dramatic change in satellite designs—from expensive and exquisite systems to cheaper, modular devices that could be built by small teams and companies. The evolutionary leap owes a major debt to the CubeSat standard.44 The CubeSat was created by a pair of professors at Cal Poly and Stanford, Jordi Puig-Suari and Bob Twiggs, who were interested in helping their students get their experiments into space.45 Discouraged by the high price of launch, the two conceived of a simple, modular satellite design—a 10 cm cube—that could work with a common mechanism, the P-Pod launcher, to piggyback on commercial launches. The simple design turned out to be transformative. NASA and commercial providers agreed to carry the small payloads. It started, as Puig-Suari and Twiggs hoped, as a wonderful platform for student experimentation, but it wasn’t long before entrepreneurial minds realized they could take advantage of the spec.46 Within a few years, commercial prototypes were flying, and the CubeSat has become the foundation for a generation of companies, like Planet Labs, that are building large constellations of tiny satellites.47 The design was a critical and underrated contribution to the current space technology renaissance.

The MIDI connector, which shaped and defined a generation of electronic music, is another example. The emergence of digital instruments in the 1980s created a need for an interoperability standard. One of the early makers of synthesizers, Dave Smith, the founder of Sequential Circuits, sought to fill the gap. In 1981, at the AES conference, Smith presented a paper titled “Universal Synthesizer Interface” as a proposal to make sure these new instruments could play nicely together.48 After trying and failing to gather consensus amongst all the largest synthesizer manufacturers, he found a small group of willing partners, including Ikutaro Kakehashi, the founder of the Roland Corporation. Smith told the story in 1997:

We sat down, just a small group of us, and we just said: let’s do it. Forget everybody else, nobody else is interested in it, let’s just do it.49

The group worked together over the next year to develop the spec. At the following year’s show, they demoed their latest synthesizers: Roland’s Jupiter-6 and Sequential’s Prophet 600. The rest of the market eventually fell in line, even though it wasn’t perfect. There were bugs and missing features that drew complaints and demands for fixes:

Sure, if we had gone through a standards committee and if we had spent 5 years developing MIDI, none of those things would have happened, so we kind of let that sort of thing—all the little details—get fixed in the marketplace.50

The initial rollout storms eventually passed and MIDI became the standard. More importantly, even though they didn’t know it, Smith and Kakehashi had proven the model of disruptive standards-making. It doesn’t require everyone’s buy-in up front—just enough people to get something working, even barely. And if it works, it works.

It should be noted that this type of entrepreneurial spunk can be found inside big companies, too. The history of the Universal Serial Bus (USB) connector illustrates that point. In 1992, Ajay Bhatt, a staff engineer at Intel, was struggling to upgrade and add peripherals to his PC. As an engineer, he was surprised at the difficulty of working between systems and was sure there was a better way. He came into work one day and pitched the concept of a universal interface standard to his managers at Intel—they didn’t bite. Undeterred, he “decided to make a lateral move within the company” and got passing enthusiasm from one of the top technical fellows at the company. And he kept building momentum:

I didn’t just rely on him. I started socializing this idea with other groups at Intel. I talked to business guys, and I talked to other technologists, and eventually, I even went out and talked to Microsoft. And we spoke to other people who ultimately became our partners, like Compaq, DEC, IBM, NEC, and others.51

Like all the great standards entrepreneurs, Bhatt was obsessed with overcoming the bottleneck. Even though it happened within a big company, the lesson from the USB is the same as MIDI, the Internet, and the CubeSat: Start with a small group of true believers and gradually work outwards.

Small and simple can quickly evolve into an immense opportunity space. The synthetic biology industry proves that point. In 2003, Tom Knight published a paper lamenting the lack of standards in the assembly of DNA sequences and, more importantly, proposing a remedy. Drawing on his background in computer science as well as the analogy of William Sellers’ 1864 appeal for screw thread standardization, Knight proposed the creation of “biobrick” components which could be used and reused as interchangeable parts. The goal, Knight explained, was “to replace this ad hoc experimental design with a set of standard and reliable engineering mechanisms to remove much of the tedium and surprise during assembly of genetic components into larger systems.”52

Biobricks have become the foundation of the popular International Genetically Engineered Machine (iGEM) competition, and the key ideas of standardization and abstraction have underpinned much of the progress in synthetic biology.53 The Knight paper and the following results are a good reminder that standards are not just patchwork fixes. This way of thinking can be used to create new industries from whole cloth.

It should be noted that the disruptive standards I’ve mentioned are now all safely housed within consortia—BioBricks Foundation, USB Implementers Forum, Cal Poly CubeSat Laboratory—and are adhering to the established norms of standards-making: committees, working groups, draft releases, etc. Just because they started ad hoc or independent doesn’t mean they stayed that way. In fact, the mark of a successful disruptive standard is that it does become or fit into a consortium.54 The disruptive model is a new way to kickstart a standard, but the consortia-based model is still the best way to maintain one.

The bigger lesson comes from comparing the success of these disruptive standards against the challenges faced by open source hardware projects. A true standards movement must be rooted in the fundamentals. The idealism can’t outweigh the pragmatism.

Rethinking Ocean Connectivity

Years after the OpenROV lessons, the Bristlemouth project gave us another chance to rethink ocean technology. When the opportunity came, we skipped the open source hardware rhetoric and modeled the effort on the CubeSat example. We had learned through hard-won experience that ocean technology is being hindered by the lack of interoperability standards. It was Stackpole’s insight: “Everybody wants custom shit.”

No matter what we built, our customers and partners always needed some other sensor that we hadn’t accounted for. This was a costly proposition, as every configuration would require software, electrical, and mechanical engineering reviews to ensure compatibility. There was no plug-and-play connectivity between sensors (dissolved oxygen, conductivity, pressure, etc.) and platforms (buoys, robots, ships). A custom need often translates to high costs. Modular components and a simple, universal interface would be a better situation, but the industry hasn’t settled on any one design. USB and the other terrestrial analogs stand no chance against corrosive ocean environments. And the underwater connectors that do exist are too valuable for any of the private manufacturers to consider sharing.

More important than Stackpole’s insight about interoperability, now we were part of a bigger team. OpenROV had merged to create Sofar Ocean Technologies and the company was planning a modular technology architecture that would allow the company to make flexible additions and changes to Sofar’s smart buoy and mooring system. Want to add a dissolved oxygen sensor? No problem. Need temperature sensors at various depths throughout the water column? Easy. The design would allow us to quickly adapt the configuration to suit customer needs. We had identified the right problem and were on a path to solving it for ourselves. That’s when we—notably Evan Shapiro (Sofar’s CTO), Tim Janssen (Sofar’s CEO), Eric Stackpole, and I—started thinking bigger.

We began having informal discussions about turning our internal plans outward— moving from an internal connectivity scheme to trying to influence an industry-wide shift.

It was a lofty goal, and a delicate decision for a startup like us to pursue. But we all agreed that, like the USB and MIDI teams, we had rightly identified the bottleneck that was holding everyone back: connectors. Eric had developed an underwater connector design using a screw mechanism, face-seal O-rings, and separate wire terminals. We had pressure-tested the design down to full ocean depth, and it was simple enough that it could be manufactured for just a few dollars. Creating a standard was just barely possible. If we were going to attempt it, we needed help. We found it in bold partners in government agencies and ocean philanthropy who have supported the early stages of project development—they brought it up to the starting line.

We’re here now. The first Bristlemouth development kits are on their way out the door as of 2023 and the ultimate destiny of the protocol will be seen.

One reality is already certain: it will take time. For hardware, the uptake can be much longer than in open source software. Potential adopters look for signs of a solid foundation before they’re willing to jump in: Is the spec changing? Do the economics make sense? Does it work?

Puig-Suari reflected on the decade-long journey to seeing true adoption of the CubeSat:

When we went back and looked at launch activity, it took about ten years for things to really take off. There were a few launches before that but it wasn’t instantaneous. People needed to gain some comfort that these standards are going to be around for a while.55

Standardizers must have patience baked into their model, as normal economic cycles might not match. But the lengthy process shouldn’t hold us back from trying. Likewise, it shouldn’t stop us from documenting these projects when they’re just making their way into the world. More and better standards are reliant on these types of stories and myth-making.

The message here is not a declaration of success for the Bristlemouth project—that story isn’t finished. Instead, it’s a report back on what we’ve learned so far: engaging in standards-making is complex and profound. The treasure is buried in the process.

An Open Invitation

Thus far, I’ve focused on the what and how of standards: what they are, how they’ve evolved, and how they work. That’s all necessary background to the why, which is relatively simple: standards make the world. They hold an underappreciated and rightful place in our future-making toolbox. In Open Standards and the Digital Age, scholar Andrew Russell traces the history of standards-making from the early standardizers in the United States through the story of the IETF.56 The first eight chapters are the history and the last chapter is his analysis of what it means. He comes to a conclusion similar to the one Yates and Murphy reached in their research and the same one we learned through experience: standards complete the circuit. They serve a third and separate function from private organizations and public institutions in shaping society. Russell concludes:

Standard-setting organizations often are studied in terms of their economic function, but they should also be considered in cultural and political terms. I have argued that we should understand these hybrid organizations—and the standards they create—as value-laden expressions of ideology, or ideas about how society should be ordered and how power should be exercised. I also have argued that innovation in network standards is a form of critique; these innovations do not merely challenge what is, they take productive action and make what could be.

Standards are not inherently good or bad, in the same way companies or governments are neutral entities. It’s always case dependent. Like companies and governments, they are simply a mechanism for large-scale human cooperation, and they can be made better with focused intent.

Standards deserve your consideration, whatever your field. Beyond the societal contribution, they offer a unique personal reward. Robin Sloan, writer and media inventor, articulated it well.57 Sloan had grown frustrated with the state of social media and he decided to go further than just quitting or jumping to any of the alternative platforms that have emerged. Instead, he spent time reflecting on the question: “What do you want from the Internet, anyway?”

His answers sent him down a path of uncovering the early discussions of the IETF and culminated with him deciding to build out his own idea for a protocol, which he’s calling Spring ’83. It’s an idea, Sloan hopes, that will inspire other creators and interesting people to follow and learn from each other, without all the nonsense. He made a point to comment on the nature of the work:

Before I go further, I want to say: I recommend this kind of project, this flavor of puzzle, to anyone who feels tangled up by the present state of the Internet. Protocol design is a form of investigation and critique. Even if what I describe below goes absolutely nowhere, I’m very glad to have done this thinking and writing. I found it challenging and energizing.

Sloan tapped into the thrill of standardizing. We touched it, too. There are no promises of riches or even success, but there is a sense of personal power that comes from shaping the tool that shapes the tools. It’s rarefied air: a game taking place above the battlefield of startups and Big Tech that quietly determines the direction of technology and civilization.

Standards-making is foundational work—an unlikely source of hope for all the unfinished revolutions.

Acknowledgements. For the technical aspects of the Bristlemouth project, Evan Shapiro should get most of the credit for vision and leadership. But also Charles Cross and Alvaro Prieto for the architecture contributions. And also Eric Stackpole for the early design and later testing. Or Zack Johnson. Or any number of Sofar engineers who shouldered leadership for the project at various points. From my vantage point, this is truly a team effort. On the management side, Tim Janssen should get the credit for organizing all the parties and rallying them around a common vision. Tim would be quick to acknowledge the crucial role of Oceankind and ONR and DARPA in making this real. There’s also a large portion of credit that should be withheld and given to the early adopters who come next. Joining and building on a project like this is an underrated form of leadership. That’s a funny thing about helping a standard come to life: it requires this exact formula of credit distribution. It’s fully-decentralized heroism. Everyone has to believe. Despite my peripheral role, I absolutely felt fully invested. If this essay seems overly biographic, blame it on my enthusiasm. But make sure to give credit to everyone else.

Thanks to the Summer of Protocols cohort for lively discussion on the ideas. Thanks to the extended Bristlemouth team—Tim Janssen, Evan Shapiro, Eric Stackpole, Charles Cross, Zack Johnson, Jason Thompson, Justin Manley—for years of conversation and engaging work. Thanks to JoAnne Yates for the review and inspiration. The essay was greatly improved by feedback from Venkatesh Rao, Tim Beiko, Timber Schroff, Eric Alston, Rafael Fernandez, JoAnne Yates, Charles Cross, Alan Adams, and Margaret Sinsky.

DAVID LANG is an entrepreneur and writer. He is the executive director of the Experiment Foundation and the co-founder of multiple ocean technology companies. davidtlang.com

1. Michael Roberts, “How Cheap Robots Are Transforming Ocean Exploration,” Outside Magazine, November 2019, https://www.outsideonline.com/outdoor-adventure/exploration-survival/ocean-exploration-research-drones/.

2. “Bristlemouth modules for underwater vehicles,” Bristlemouth Forum, June 10, 2023, https://bristlemouth.discourse.group/t/bristlemouth-modules-for-underwater-vehicles/53.

3. On Credit. For the Bristlemouth project, I deserve none. I was a bit player at every stage, mostly just documenting the progress and doing research when needed. See Acknowledgements for the full story.

4. A likely answer is The Annual Book of ASTM Standards, an 80+ volume catalog of more than 12,800 standards.

5. It’s telling that we refer to corporate battles and standards wars. The implication is there’s more at stake with standards, which is probably true.

6. JoAnne Yates and Craig N. Murphy, Engineering Rules: Global Standard Setting since 1880 (Johns Hopkins University Press, 2021).

7. Yates and Murphy, p. 13.

8. Yates and Murphy, p. 28-34.

9. “Safe Financing Documents,” Y Combinator, https://www.ycombinator.com/documents/.

10. “Seeking (Paid) Case Studies on Standards,” EA Forum, May 26, 2023, https://forum.effectivealtruism.org/posts/idrBxfsHkYeTtpm2q/seeking-paid-case-studies-on-standards.

11. Patrick Greenfield, “Revealed: more than 90% of rainforest carbon offsets by biggest certifier are worthless, analysis shows,” The Guardian, January 18, 2023, https://www.theguardian.com/environment/2023/jan/18/revealed-forest-carbon-offsets-biggest-provider-worthless-verra-aoe.

12. Nature-Deployed Environmental Credit Generating Companies Announce Reykjavik Protocol, Addressing Risks in Carbon Markets, The Reykjavik Protocol, September 19, 2023, https://www.prnewswire.com/news-releases/nature-deployed-environmental-credit-generating-companies-announce-reykjavik-protocol-addressing-risks-in-carbon-markets-301931357.html.

13. Joseph Whitworth, “A Paper on an Uniform System of Screw Threads,” read at the Institution of Civil Engineers, 1841.

14. Joseph Wickham Roe, “Whitworth,” in English and American Toolbuilders (New Haven: Yale University Press, 1916), p. 98.

15. Whitworth, 1841.

16. Bruce Sinclair, “At the Turn of a Screw: William Sellers, the Franklin Institute, and a Standard American Thread,” Technology and Culture 10, no. 1 (1969): 20–34. https://doi.org/10.2307/3102001.

17. Sinclair, p. 20–34.

18. Yates and Murphy, p. 17.

19. Derek Howse, Greenwich time and the longitude (London: Philip Wilson, 1997), 71–144.

20. Yates and Murphy, p. 52.

21. Marc Levinson, The Box: How the Shipping Container Made the World Smaller and the World Economy Bigger (Princeton, New Jersey: Princeton University Press, 2016).

22. Yates and Murphy, p. 190–193.

23. Yates and Murphy, p. 239.

24. Yates and Murphy do call out the marked difference of the Internet Protocol and the IETF model to the other commercial consortia of the late 20th century, but they stop short of giving it a new name. In later work, Yates explored viewing the variety of standards-making processes as different genres.

25. Stephen Walli, “Understanding Technology Standardization Efforts,” https://stephesblog.blogs.com/papers/stdsprimer.pdf.

26. Carl Shapiro and Hal Varian, “The Art of Standards Wars,” California Management Review, vol. 41, no. 2, Winter 1999, 8–32.

27. Carl Shapiro and Hal Varian, Information Rules: A Strategic Guide to the Network Economy (Boston: Harvard Business School Press, 1999).

28. Rebecca Bellan, “GM follows Ford’s lead and adopts Tesla chargers,” TechCrunch, June 8, 2023, https://techcrunch.com/2023/06/08/gm-follows-fords-lead-and-adopts-tesla-chargers/.

29. Clayton M. Christensen, The Innovator’s Dilemma: When New Technologies Cause Great Firms to Fail (Boston: Harvard Business School Press, 1997).

30. Judy O’Neill, “An Interview with Vinton Cerf,” Charles Babbage Institute, 1990. https://conservancy.umn.edu/bitstream/handle/11299/107214/oh191vgc.pdf.

31. “How are Standards Developed?” IEEE, January 13, 2021, https://standards.ieee.org/beyond-standards/how-standards-are-made/.

32. Yates and Murphy, p. 241–251.

33. David Clark, “A Cloudy Crystal Ball,” Address to the IETF, 1992, https://groups.csail.mit.edu/ana/People/DDC/future_ietf_92.pdf.

34. It hurts to only glance over the story because the origin of the WWW is as unlikely and heroic as they come. In lieu of a longer explanation, I recommend Tim Berners-Lee’s memoir, Weaving the Web.

35. Eric S. Raymond, The Cathedral & The Bazaar: Musings on Linux and Open Source by an Accidental Revolutionary (Sebastopol, CA: O’Reilly Media, 1999).

36. Hammersly, “A Short History of RSS and Atom.”

37. Dave Winer, “Manifesto: Rules for standards-makers,” Scripting News, May 9, 2017, http://scripting.com/2017/05/09/rulesForStandardsmakers.html.

38. “The state of open source software,” Octoverse 2022, GitHub, accessed July 2023, https://octoverse.github.com/.

39. Carl F. Cargill, “Why Standardization Efforts Fail,” Journal of Electronic Publishing, Volume 14, Issue 1: Standards, Summer 2011, https://doi.org/10.3998/3336451.0014.103

40. Nadia Eghbal, Working in Public: The Making and Maintenance of Open Source Software (San Francisco: Stripe Press, 2020).

41. Open Source Hardware Association, “Open Source Hardware (OSHW) Definition 1.0,” https://www.oshwa.org/definition/.

42. Phil Torrone, “When Open Becomes Opaque: The Changing Face of Open-Source Hardware Companies,” Adafruit Blog, July 12, 2023, https://blog.adafruit.com/2023/07/12/when-open-becomes-opaque-the-changing-face-of-open-source-hardware-companies/.

43. Rick Brown, “Pulling back from open source hardware, MakerBot angers some adherents,” CNET, September 27, 2012, https://www.cnet.com/tech/tech-industry/pulling-back-from-open-source-hardware-makerbot-angers-some-adherents/.

44. Michelle Loxton, “Twenty Years On, The Little CubeSat Is Bigger Than Ever,” Science Friday, June 30, 2023, https://www.sciencefriday.com/segments/cubesat-20-year-anniversary/.

45. Stephen Clark, “A chat with Bob Twiggs, father of the CubeSat,” Spaceflight Now, March 8, 2014, https://www.spaceflightnow.com/news/n1403/08cubesats/.

46. Loxton.

47. Eric Hand, “Thinking Inside the Box: A look at the history of the CubeSat,” Science, April 10, 2015, vol. 348, no. 6231, pp. 176–177, https://doi.org/10.1126/science.348.6231.176.

48. MIDI Association, “MIDI History Chapter 6-MIDI Begins 1981–1983,” https://www.midi.org/midi-articles/midi-history-chapter-6-midi-begins-1981-1983.

49. Video Archive of Electroacoustic Music, “David Smith on MIDI,” 1997, https://www.youtube.com/watch?v=Jq6_vy4Pcwk.

50. Video Archive of Electroacoustic Music.

51. Joel Johnson, “The unlikely origins of USB, the port that changed everything,” Fast Company, May 29, 2019, https://www.fastcompany.com/3060705/an-oral-history-of-the-usb.

52. Tom Knight, “Idempotent Vector Design for Standard Assembly of Biobricks,” MIT Artificial Intelligence Laboratory, MIT Synthetic Biology Working Group, 2003, http://hdl.handle.net/1721.1/21168.

53. Jason Kelly on Twitter, https://twitter.com/jrkelly/status/1682139988522139648.

54. It’s possible that a disruptive standard becomes a de facto standard that’s maintained by a company with large market power, but I’d argue that’s a less successful outcome given the good examples set by the IETF, W3C, and others.

55. Debra Werner, “Cubesat co-inventor Jordi Puig-Suari sails into the sunset,” Space News, August 7, 2018, https://spacenews.com/cubesat-co-inventor-jordi-puig-suari-sails-into-the-sunset/.

56. Andrew L. Russell, Open Standards and the Digital Age: History, Ideology, and Networks, Cambridge Studies in the Emergence of Global Enterprise (Cambridge: Cambridge University Press, 2014). https://doi.org/10.1017/CBO9781139856553.

57. Robin Sloan, “Specifying Spring ’83,” June 2022, https://www.robinsloan.com/lab/specifying-spring-83/.

© 2023 Ethereum Foundation. All contributions are the property of their respective authors and are used by Ethereum Foundation under license. All contributions are licensed by their respective authors under CC BY-NC 4.0. After 2026-12-13, all contributions will be licensed by their respective authors under CC BY 4.0. Learn more at: summerofprotocols.com/ccplus-license-2023

Summer of Protocols :: summerofprotocols.com

ISBN-13: 978-1-962872-04-1 print

ISBN-13: 978-1-962872-29-4 epub

Printed in the United States of America

Printing history: December 2023

Inquiries :: hello@summerofprotocols.com

Cover illustration by Midjourney :: prompt engineering by Josh Davis

«standards and measurements, nautical, use orange, white background»